Reaffirming the importance of the normal distribution, the central limit theorem is one of the key concepts in statistics.

So what exactly is it? How is it stated mathematically? And above all, how is it applied? That’s what we’ll be looking at in this article.

The central limit theorem and convergence to the normal distribution

The central limit theorem establishes that the suitably normalized sum of a sequence of independent, identically distributed random variables, defined on the same probability space, converges in distribution to a normal distribution.

In other words: the more independent and identically distributed random variables are added together, the closer the probability distribution of their sum, once centered and rescaled, will be to a normal distribution (also known as a Gaussian curve or a bell curve).

And this applies to a wide range of probability distributions for a random event, such as the continuous uniform distribution, the triangular distribution, the exponential distribution and so on, provided the distribution has a finite variance.

As soon as the event is repeated often enough, the distribution of its mean gradually approaches a normal distribution. Even if at first (after adding up the variables once or twice) the distribution was not normal, it gets closer and closer to normal as more variables are added.
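This convergence can be observed numerically. The sketch below is only an illustration, not a proof: it assumes an exponential distribution with rate 1 (mean 1, standard deviation 1), and the helper names `standardized_sums` and `fraction_within_one_sd` are made up for this example. It measures the share of standardized sums that fall within one standard deviation of the mean, which is about 0.683 for a normal distribution.

```python
import random


def draw_exponential(rng):
    """One draw from an exponential distribution with rate 1 (mean 1, sd 1)."""
    return rng.expovariate(1.0)


def standardized_sums(draw, mu, sigma, n, trials, rng):
    """Return `trials` samples of (S_n - n*mu) / (sigma * sqrt(n))."""
    samples = []
    for _ in range(trials):
        s_n = sum(draw(rng) for _ in range(n))
        samples.append((s_n - n * mu) / (sigma * n ** 0.5))
    return samples


def fraction_within_one_sd(samples):
    """Share of samples in [-1, 1]; about 0.683 for a standard normal."""
    return sum(-1.0 <= x <= 1.0 for x in samples) / len(samples)


rng = random.Random(0)  # fixed seed so the run is reproducible

results = {}
for n in (1, 2, 10, 100):
    z = standardized_sums(draw_exponential, 1.0, 1.0, n, 10_000, rng)
    results[n] = fraction_within_one_sd(z)
    print(f"n={n:>3}  share within one standard deviation: {results[n]:.3f}")
```

For n = 1 the share is clearly too high for a bell curve (the exact value for this exponential distribution is 1 − e⁻², about 0.865), and it drifts toward 0.683 as n grows.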

It is precisely this principle that explains why so many natural phenomena, which result from the sum of many small independent effects, are well described by the normal law.

A little history: the central limit theorem first came into being in 1733, when De Moivre showed that the normal law approximates the binomial distribution.

But it was not until the work of Pierre-Simon de Laplace in 1812 that the theorem was extended to more general cases. And it wasn’t until 1920 and George Pólya’s work (“Über den zentralen Grenzwertsatz der Wahrscheinlichkeitsrechnung und das Momentenproblem”) that it was given the name central limit theorem (zentraler Grenzwertsatz in the original German).

The mathematical statement of the central limit theorem

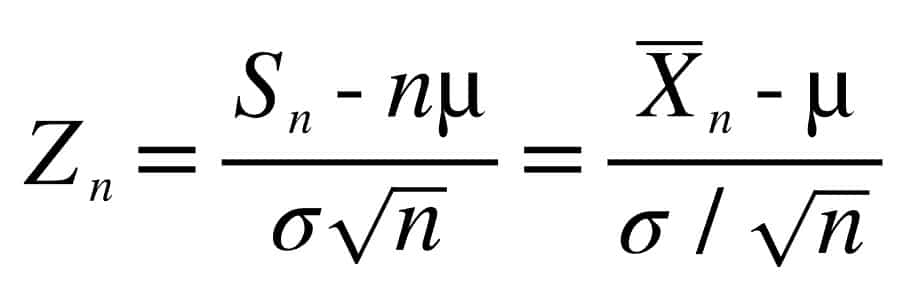

To state the central limit theorem, consider:

- X1, X2, …: a sequence of independent, identically distributed real random variables with common distribution D.

- μ and σ: the expectation and standard deviation of D, both finite, with σ ≠ 0.

- Sn = X1 + X2 + … + Xn: the sum of the first n random variables.

- nμ: the expectation of Sn.

- σ√n: the standard deviation of Sn.
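The last two items follow from linearity of expectation and, for the variance, from the independence of the variables. A short derivation, using only the symbols defined above:

```latex
\begin{aligned}
\mathbb{E}[S_n] &= \sum_{i=1}^{n} \mathbb{E}[X_i] = n\mu, \\
\operatorname{Var}(S_n) &= \sum_{i=1}^{n} \operatorname{Var}(X_i) = n\sigma^2
\quad\Longrightarrow\quad \operatorname{sd}(S_n) = \sigma\sqrt{n}.
\end{aligned}
```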

The central limit theorem concerns the standardized sum:

Zn = (Sn − nμ) / (σ√n)

Here, Zn’s expectation is 0 and its standard deviation is 1. In other words, the variable is centered and scaled (standardized).

Zn converges in distribution to the standard normal distribution N(0, 1) as n tends towards infinity.

So for any real z:

lim n→∞ P(Zn ≤ z) = Φ(z)

where Φ is the cumulative distribution function of N(0, 1).
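The limit can be checked empirically by estimating P(Zn ≤ z) through simulation and comparing it with Φ(z). The sketch below makes several assumptions that are not in the statement above: the Xi are Uniform(0, 1) variables (so μ = 0.5 and σ = √(1/12)), n is fixed at 30, and the function name `empirical_cdf_of_zn` is made up for this illustration. Φ is taken from Python’s standard `statistics.NormalDist`.

```python
import random
from statistics import NormalDist


def empirical_cdf_of_zn(n, z, trials, rng):
    """Estimate P(Z_n <= z) for sums of n Uniform(0, 1) variables."""
    mu = 0.5                 # mean of Uniform(0, 1)
    sigma = (1 / 12) ** 0.5  # standard deviation of Uniform(0, 1)
    hits = 0
    for _ in range(trials):
        s_n = sum(rng.random() for _ in range(n))
        z_n = (s_n - n * mu) / (sigma * n ** 0.5)
        hits += z_n <= z
    return hits / trials


rng = random.Random(42)  # fixed seed so the run is reproducible
phi = NormalDist().cdf   # Φ, the CDF of N(0, 1)

for z in (-1.0, 0.0, 1.5):
    estimate = empirical_cdf_of_zn(n=30, z=z, trials=20_000, rng=rng)
    print(f"z={z:+.1f}  empirical={estimate:.3f}  Phi(z)={phi(z):.3f}")
```

The two columns should agree to roughly two decimal places; the remaining gap comes from both the finite n and the sampling noise of the simulation.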

Example of application of the central limit theorem

To help you better understand the central limit theorem, here’s a concrete example.

Marine and Julien are playing dice. Every time they roll an even number, they win €1, and every time they roll an odd number, they lose €1. The gains from successive rolls are independent, identically distributed random variables: each one is +1 or −1 with probability 1/2, so μ = 0 and σ = 1. In the first few rolls, the balance may tip towards even numbers (and therefore wins) or odd numbers (and therefore losses). But as the game progresses, the average gain per roll gets closer and closer to 0, and the total gain after n rolls is approximately normally distributed, with mean 0 and standard deviation √n.
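The game can be simulated to see two things at once: the average gain per roll settling near 0, and the total gain over many games spreading out with a standard deviation close to √n. This is a minimal sketch, assuming a fair six-sided die, 400 rolls per game and 2,000 simulated games; the function name `total_gain` and these figures are illustrative choices, not part of the original example.

```python
import random
import statistics


def total_gain(n_rolls, rng):
    """Net gain in euros after n_rolls: +1 for an even face, -1 for an odd one."""
    return sum(1 if rng.randint(1, 6) % 2 == 0 else -1 for _ in range(n_rolls))


rng = random.Random(7)  # fixed seed so the run is reproducible
n_rolls = 400           # rolls per game, so sqrt(n) = 20
gains = [total_gain(n_rolls, rng) for _ in range(2_000)]

mean_gain = statistics.fmean(gains)  # expected to be near 0
sd_gain = statistics.pstdev(gains)   # expected to be near sqrt(400) = 20

print(f"average total gain:        {mean_gain:.2f} euros")
print(f"average gain per roll:     {mean_gain / n_rolls:.4f} euros")
print(f"standard deviation of S_n: {sd_gain:.2f} (theory: {n_rolls ** 0.5:.0f})")
```

Plotting a histogram of `gains` would show the familiar bell shape centered on 0.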

The central limit theorem shows up in many everyday situations: for example, the height and weight of individuals within a population, or the average salary within a company. The distribution of the sample average comes to resemble a bell curve as the sample is enlarged, provided the observations are independent and have finite variance.

Because of this wide variety of applications, the central limit theorem is an essential statistical tool for data scientists, helping them build Machine Learning models. Beyond data science tools, it is therefore essential to master the underlying mathematical concepts, which is precisely why training matters.

Things to remember

- The standardized sum of independent, identically distributed random variables with finite variance approaches a normal distribution as more variables are added together.

- With this statement, the central limit theorem underlines the importance of the normal distribution.

- Moreover, this theorem applies to a wide variety of situations, from a game of chance to determining the average wage or the average height in a population.