Theano is a Python library for solving problems that involve large datasets by means of multi-dimensional arrays. Below, you will find more details on how to accelerate your research using Theano: etymology, creation, features, and its place in the Python ecosystem.

Theano is first of all the name of a Greek mathematician and philosopher of the 6th century BCE, traditionally described as the wife (or, in some accounts, the student) of Pythagoras.

What is Theano?

Returning to our main concern, Theano is a Python library whose development began in 2007 in the research group led by Yoshua Bengio at the University of Montreal. It is written in Python. Theano uses multi-dimensional arrays to define, optimize, and evaluate mathematical expressions, especially in Deep Learning or in problems involving a substantial amount of data. It is well regarded in academic research and development.

How do you code with Theano?

- It is installed using pip install Theano from the terminal.

- Theano’s “tensor” sub-library (theano.tensor) is conventionally imported under the alias T.

- A Theano function and variables are declared as follows:

As you can see, the Theano function takes a list of input variables [a, b] and a previously defined variable c as its output; c is an operation (in this case, addition) on a and b. When the function is called, the displayed result is 10 in this case.

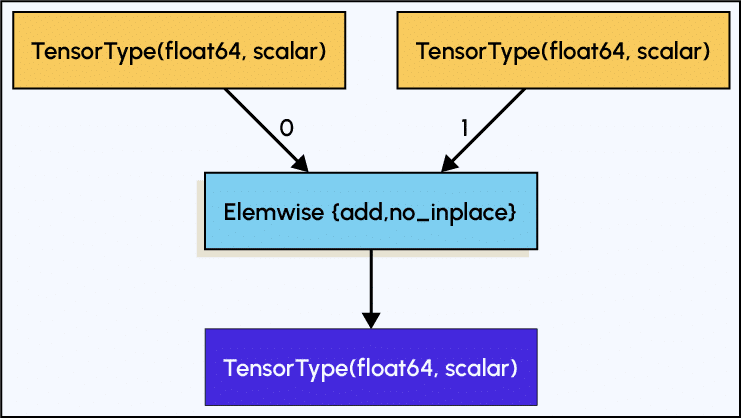

The graph of the function is shown below, generated by a Theano command that we will discuss in the next section. The first two blocks (in green) correspond to the two variables a and b, which are double-precision floating-point scalars defined with tensor.dscalar().

The middle block (in red) represents the operation that defines c (add, for addition). The blue block corresponds to the result.

What makes it special?

From a functional and performance perspective, this library:

1. Creates an expression that is evaluated by a Theano function.

2. Makes it easy to differentiate with respect to several variables, perform matrix computations, and calculate gradients.

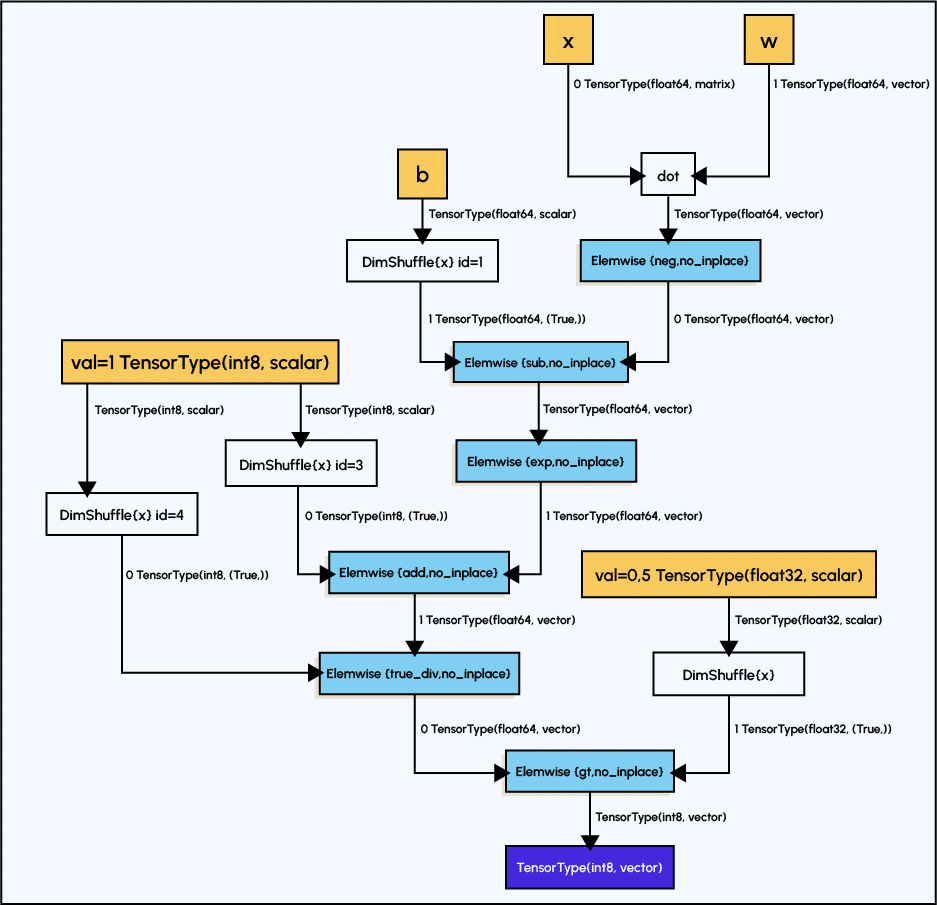

3. Automatically generates symbolic graphs that can be visualized with the pydotprint function from theano.printing (which relies on Graphviz and the pydot library).

These graphs can be highly complex, and Theano applies many advanced optimization techniques (algebra and compilation) to them.

These techniques consider the overall graph to generate highly efficient code, especially in the context of repetitive calculations.

Theano does not rely solely on local optimization techniques; it can also reorganize calculations (their order, repeated subexpressions) to make them faster, much as two algorithms for searching a word in a text can differ in speed (O(n) versus O(n²)).

The Theano function acts as a bridge to inject compiled C code into the runtime (the software responsible for program execution).

Below, you can see an example of a more complex graph than the previous one. Here, there is an additional element, the white blocks, which represent predefined functions from other libraries, for example:

Calculations can run up to 140 times faster on a GPU (which supports only the float32 format) than in C on a CPU or in pure Python. Dynamic generation of C code enables faster evaluation of expressions.

A few additional points:

1. It is fast and stable when dealing with calculations of the type log(1+exp(x)) for large values of x.

2. It includes unit-testing and self-verification tools for detecting and diagnosing bugs and other issues.

3. It can be adapted for use with convolutional networks and recurrent networks.
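To make the first point concrete, here is a plain-Python sketch (not Theano's own code) of why log(1+exp(x)) needs care for large x, and of the kind of algebraically equivalent rewrite that keeps it stable. The function names `naive_softplus` and `stable_softplus` are hypothetical.

```python
import math


def naive_softplus(x):
    # Direct translation of log(1 + exp(x)); exp(x) overflows for large x.
    return math.log(1.0 + math.exp(x))


def stable_softplus(x):
    # Equivalent form: max(x, 0) + log(1 + exp(-|x|)).
    # exp(-|x|) always lies in [0, 1], so it cannot overflow.
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


try:
    naive_softplus(1000.0)
    naive_overflowed = False
except OverflowError:
    naive_overflowed = True

print(naive_overflowed)         # True
print(stable_softplus(1000.0))  # 1000.0
```

With a library that rewrites the expression graph, the user can keep writing the naive formula while the stable form is what actually gets evaluated.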

How is it linked to other libraries?

- Theano is tightly integrated with NumPy: numpy.ndarray objects are used as the inputs and outputs of compiled Theano functions. It is also a library sometimes called on for the internal operations of Keras, as an alternative to TensorFlow (for machine learning models).

- Theano can serve as a backend in Keras to perform all internal operations. TensorFlow, which serves a similar purpose, can also be used in this role.

Conclusion

Theano is, therefore, a tool that optimizes and accelerates your Deep Learning or Machine Learning computations, based on a graph system that encompasses every computation step. Despite its age, it is not outdated and deserves a place on the list of optimization libraries. If you wish to gain expertise in these fields, DataScientest has designed a curriculum for Deep Learning.